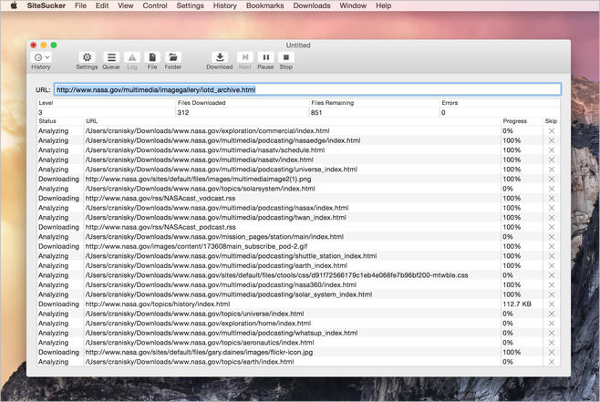

Something like SiteSucker makes a lot more sense than cloning a site for helping folks archive their work so that it can be accessible for the long term, and building that feature into Reclaim Hosting’s services would be pretty cool.

All those database-driven sites need to be updated, maintained, and protected from hackers and spam. One option is cloning a site in Installatron on Reclaim Hosting, but that keeps a dynamic database running behind what should be a static copy, so why not just suck that site? And while cloning a site using Installatron is cheaper and easier given it’s built into Reclaim offerings, it’s not all that sustainable for us or them. To reinforce that point, right after I finished sucking this site, a faculty member submitted a support ticket asking the best way to archive a specific moment of a site so that they could compare it with future iterations. I can see more than a few uses for my own sites, not to mention the many others I help support. I don’t pay for that many applications, but this is one that was very much worth the $5 for me.

SiteSucker is a Macintosh application that automatically downloads Web sites from the Internet. It does this by asynchronously copying the site's webpages, images, PDFs, style sheets, and other files to your local hard drive, duplicating the site's directory structure. Enter a URL, press return, and SiteSucker can download an entire Web site. SiteSucker can download files unmodified, or it can “localize” the files it downloads, allowing you to browse a site off-line. SiteSucker Pro is an enhanced version of SiteSucker; the listing here is SiteSucker Pro 4.0.1 (multilingual, macOS, 6 MB).

The release notes mention a fix for a bug that could cause SiteSucker to crash if it needs to ask the user for permission to open a file. Version 2.7.2 fixed a bug that could cause SiteSucker to crash on OS X 10.9.x Mavericks.

WGET is a piece of free software from GNU designed to retrieve files using the most popular internet protocols.

Download the Windows XP Mode virtual hard disk. When it completes, don't install it yet. Instead, browse to the executable, then right-click and select 7-Zip > Open archive > cab from the context menu.
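To make the "duplicating the site's directory structure" idea a bit more concrete, here is a minimal Python sketch of how a downloader might decide where each URL lands on disk. This is only an illustration and not how SiteSucker or WGET actually do it: the function name `local_path_for`, the `mirror` root folder, and the choice of `index.html` for directory-style URLs are all assumptions of mine. It also ignores query strings and does no sanitizing of odd paths.

```python
from pathlib import PurePosixPath
from urllib.parse import urlsplit


def local_path_for(url: str, root: str = "mirror") -> PurePosixPath:
    """Map a URL to a relative path that mirrors the site's directory layout.

    Hypothetical helper for illustration; not taken from any real tool.
    """
    parts = urlsplit(url)
    path = parts.path
    # Directory-style URLs ("" or ending in "/") get an index.html inside them.
    if path == "" or path.endswith("/"):
        path += "index.html"
    # Drop the leading slash so the path nests under root/host/.
    return PurePosixPath(root, parts.netloc, path.lstrip("/"))


if __name__ == "__main__":
    for url in (
        "https://example.com",
        "https://example.com/blog/",
        "https://example.com/css/site.css?v=2",
    ):
        print(url, "->", local_path_for(url))
```

A real tool would also have to rewrite the links inside each saved page to point at these local paths, which is the "localize" step described above.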

SiteSucker is described as "a macOS application that automatically downloads websites from the Internet. It does this by asynchronously copying the site's webpages, images, PDFs, style sheets, and other files to your local hard drive, duplicating the site's directory structure" and is a website downloader in the file sharing category. Free Download Rick Cranisky SiteSucker full version standalone offline installer for macOS.

How to install checkra1n 0.12.4 on Windows: you can install it by following the steps below. After the file finishes downloading, open your downloads folder and check for the downloaded folder. After opening it, click download to get the folder. Download links for this and other setups are available here.

Scrapy's features include:

* Fast and powerful - write the rules to extract the data and let Scrapy do the rest.
* Easily extensible - extensible by design, plug in new functionality easily without having to touch the core.
* Portable, Python - written in Python and runs on Linux, Windows, Mac and BSD.
* Built-in support for selecting and extracting data from HTML/XML sources using extended CSS selectors and XPath expressions, with helper methods to extract using regular expressions.
* An interactive shell console (IPython aware) for trying out the CSS and XPath expressions to scrape data, very useful when writing or debugging your spiders.
* Built-in support for generating feed exports in multiple formats (JSON, CSV, XML) and storing them in multiple backends (FTP, S3, local filesystem).
* Robust encoding support and auto-detection, for dealing with foreign, non-standard and broken encoding declarations.
* Strong extensibility support, allowing you to plug in your own functionality using signals and a well-defined API (middlewares, extensions, and pipelines).
* Wide range of built-in extensions and middlewares for handling HTTP features like compression, authentication, caching, user-agent spoofing, and robots.txt.

HTTrack WebSite Copier allows you to download a web site from the Internet to a local directory, recursively building all directories and getting HTML, images, and other files from the server to your computer.
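For a rough sense of what the recursive downloading that HTTrack is described as doing involves, here is a small standard-library Python sketch of the first step: pulling the same-site links out of a page so they can be fetched next. It is not HTTrack's or Scrapy's API. `LinkCollector` and `same_site_links` are names I made up, and I am assuming that only `href` and `src` attributes matter, which a real mirroring tool would not assume.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class LinkCollector(HTMLParser):
    """Collect absolute URLs from href/src attributes (illustrative only)."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name in ("href", "src") and value:
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, value))


def same_site_links(html, base_url):
    """Return de-duplicated links on the same host as base_url, in page order."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    host = urlsplit(base_url).netloc
    result = []
    for link in collector.links:
        if urlsplit(link).netloc == host and link not in result:
            result.append(link)
    return result


if __name__ == "__main__":
    page = (
        '<a href="/about/">About</a>'
        '<img src="img/logo.png">'
        '<a href="https://other.example/x">Elsewhere</a>'
    )
    print(same_site_links(page, "https://example.com/blog/"))
```

A crawler would repeat this for every page it downloads, keeping a set of URLs already visited so it does not loop forever.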
